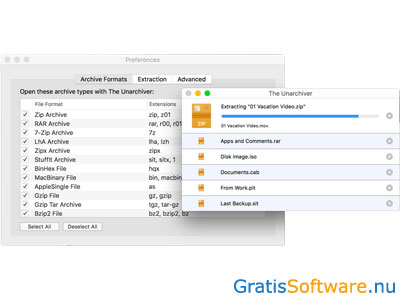

If you need to open or extract RAR files on a Mac, one of the best options is The Unarchiver. Compared to the built-in Archive Utility, The Unarchiver is a more complete tool that gives you additional functionality when unpacking archives. The app lets you open and extract RAR files, as well as almost any other archive format, on your computer.

macOS has a native program called Archive Utility, hidden in a system folder, that lets you create compressed files and manage various archives. However, it doesn't give you much control over the process. Plus, it can only handle a limited number of archive formats.

To get started with 7-Zip, download the software from the website and install it on your Windows computer. After that, you can double-click any RAR file to open it and extract its contents. To extract the contents of a RAR file directly, right-click it and select 7-Zip > Extract. You can do this with or without opening the 7-Zip app first.

When a zip archive arrives as bytes in memory, for example inside an AWS Lambda function, you need to process the contents correctly: wrap them in a BytesIO object and open it with the standard library's ZipFile (see the zipfile documentation). Once the data has been passed to ZipFile, you can call read() on its contents. What to do from there depends on your specific use case. If the archives have more than one file inside, you will need logic for handling each one. The approach described here assumes you have one or a few small CSV files to process and returns a dictionary with the file name as the key and the file contents as the value. The next step is to read the CSV files and return the data with a status code 200 in the response; one way is to wrap the data in a StringIO object and use a CSV reader to handle it. Keep in mind, your needs may be different. Once the result is passed via the response, the Lambda function can hand off the processing to another AWS process.
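As a rough illustration of the in-memory steps described above, here is a minimal sketch. The function names `extract_csv_files` and `build_response` are made up for this example, and it assumes the CSV members are UTF-8 encoded and small enough to hold in memory. It also returns parsed rows rather than raw file contents, which may or may not suit your case.

```python
import csv
import io
from zipfile import ZipFile


def extract_csv_files(contents):
    """Return {file name: list of CSV rows} for each .csv member of a zip held in memory.

    `contents` is the raw bytes of the zip archive (hypothetical input).
    """
    results = {}
    # BytesIO gives ZipFile the seekable file-like object it needs.
    with ZipFile(io.BytesIO(contents)) as archive:
        for name in archive.namelist():
            if not name.lower().endswith(".csv"):
                continue  # skip members that are not CSV files
            # Assumption: members are UTF-8 text.
            text = archive.read(name).decode("utf-8")
            results[name] = list(csv.reader(io.StringIO(text)))
    return results


def build_response(contents):
    """Wrap the extracted data in a response dict with a 200 status code."""
    return {"statusCode": 200, "body": extract_csv_files(contents)}
```

If your archives contain other file types or very large members, the loop over `namelist()` is where the per-file handling logic would need to change.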

The Unarchiver is a file decompressor compatible with the vast majority of available formats, both on OS X and on other operating systems. With The Unarchiver you can extract the contents of practically any compressed file simply by double-clicking it or sending it to The Unarchiver's icon.

S3 isn't really designed to let you unzip archives in place; normally you would have to download the file, process it, and upload the extracted files. You could mount the S3 bucket as a local filesystem using s3fs and FUSE (see the article and GitHub site). This still requires the files to be downloaded and uploaded, but it hides these operations behind a filesystem interface. If your main concern is to avoid downloading data out of AWS to your local machine, then of course you could download the data onto a remote EC2 instance and do the work there, with or without s3fs. This keeps the data within Amazon data centers.

You may be able to perform remote operations on the files, without downloading them onto your local machine, using AWS Lambda. You would need to create, package, and upload a small program written in node.js to access, decompress, and upload the files. This processing will take place on AWS infrastructure behind the scenes, so you won't need to download any files to your own machine. See the FAQs. Finally, you need to find a way to trigger this code - typically, in Lambda, this would be triggered automatically by upload of the zip file to S3. If the file is already there, you may need to trigger it manually, via the invoke-async command provided by the AWS API. See the AWS Lambda walkthroughs and API docs. However, this is quite an elaborate way of avoiding downloads, and probably only worth it if you need to process large numbers of zip files! Note also that (as of Oct 2018) Lambda functions are limited to 15 minutes maximum duration (the default timeout is 3 seconds), so they may run out of time if your files are extremely large - but since scratch space in /tmp is limited to 500MB, your file size is also limited.

If keeping the data in AWS is the goal, you can use AWS Lambda to connect to S3 (I connect the Lambda function via a trigger from S3) and then open the archive and decompress it, with no need to write to disk. If the function is initiated via a trigger, Lambda will suggest that you place the contents in a separate S3 location to avoid looping by accident. The file contents are accessed through the response, which is obtained for the object named in the event triggered by S3. If fetching the object fails, make sure it exists and that your bucket is in the same region as the function. The response body will be an instance of a StreamingBody object, which is a file-like object with a few convenience functions. Use the read() method, passing an amt argument if you are processing large files or files of unknown size. Working on an archive in memory requires a few extra steps: to open the archive, process it, and then return the contents, you can wrap the bytes you read, as in `Input_zip = ZipFile(io.BytesIO(contents))`.
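For the Lambda-based approach, the following is a minimal sketch of reading an S3 object body in chunks and opening it as a zip archive without touching disk. The helper names `read_fully` and `list_archive_members`, the 1 MB chunk size, and the shape of the returned dictionary are assumptions made for illustration. The handler assumes a standard S3 trigger event and that boto3 is available in the Lambda runtime.

```python
import io
import urllib.parse
from zipfile import ZipFile


def read_fully(body, chunk_size=1024 * 1024):
    """Read a file-like object (such as a StreamingBody) in chunks into a BytesIO."""
    buffer = io.BytesIO()
    while True:
        chunk = body.read(chunk_size)  # passes amt, so memory use per read is bounded
        if not chunk:
            break
        buffer.write(chunk)
    buffer.seek(0)
    return buffer


def list_archive_members(body):
    """Open the archive held in memory and return the names of its members."""
    with ZipFile(read_fully(body)) as archive:
        return archive.namelist()


def lambda_handler(event, context):
    # Imported here so the helpers above can be exercised without AWS installed.
    import boto3

    record = event["Records"][0]["s3"]
    bucket = record["bucket"]["name"]
    # Object keys in S3 events are URL-encoded.
    key = urllib.parse.unquote_plus(record["object"]["key"])
    response = boto3.client("s3").get_object(Bucket=bucket, Key=key)
    return {"statusCode": 200, "files": list_archive_members(response["Body"])}
```

Note that the whole archive still ends up in memory here, because ZipFile needs a seekable object; chunked reading only bounds the size of each individual read.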
